AI Architectures

A Comparison of AI Architectures: LLMs, LNNs, and Neuromorphic AI

1. Large Language Models (LLMs)

Example company: OpenAI

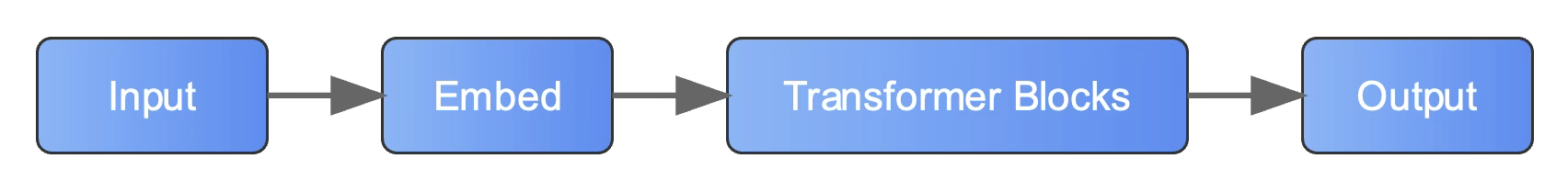

Core Concept: Utilizes transformer blocks and attention mechanisms to process sequential data, primarily text.

Core Concept: Utilizes transformer blocks and attention mechanisms to process sequential data, primarily text.

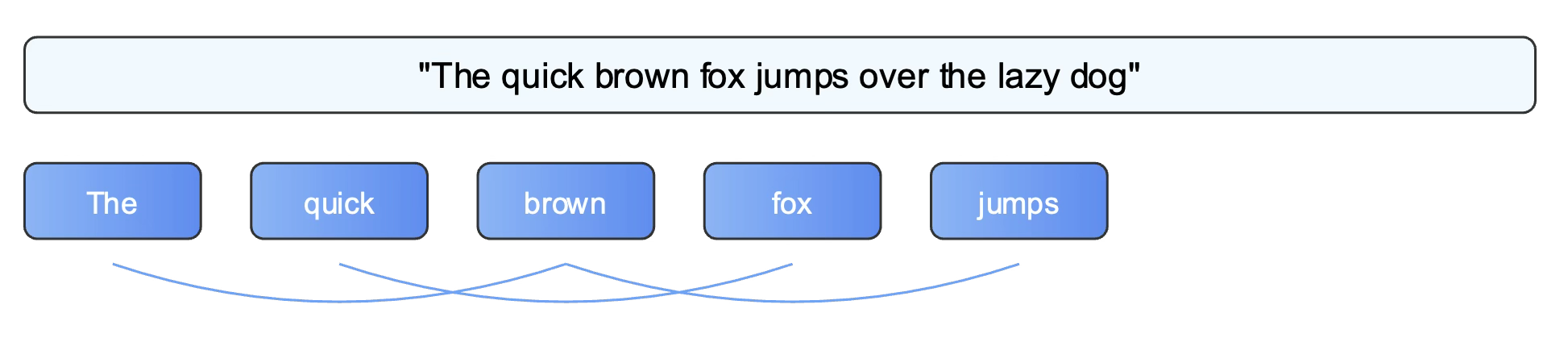

Processing Method: Processes information by breaking it down into sequential tokens and analyzing relationships between them.

Processing Method: Processes information by breaking it down into sequential tokens and analyzing relationships between them.

Key Characteristics

- Massive parameter count

- Sequential token processing

- Attention mechanisms

Applications

- Text generation

- Language translation

- Content creation

Advantages

- Strong language understanding capabilities

- Versatile across a wide range of tasks

- Benefits from regular improvements and scaling

Disadvantages

- Requires high computational resources

- High energy consumption

- Can be prone to generating incorrect or nonsensical information

2. Liquid Neural Networks (LNNs)

Example company: Liquid AI

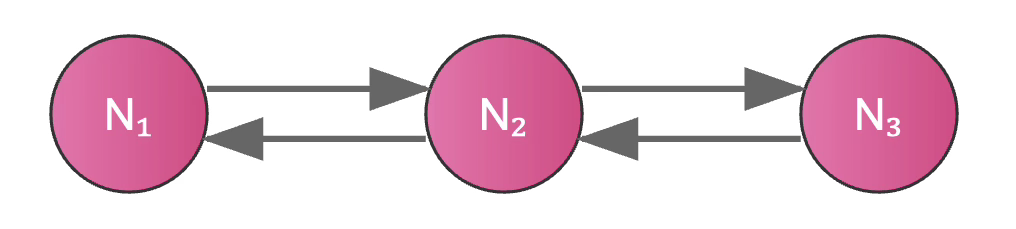

Core Concept: A type of recurrent neural network that uses differential equations to model continuous-time dynamics.

Core Concept: A type of recurrent neural network that uses differential equations to model continuous-time dynamics.

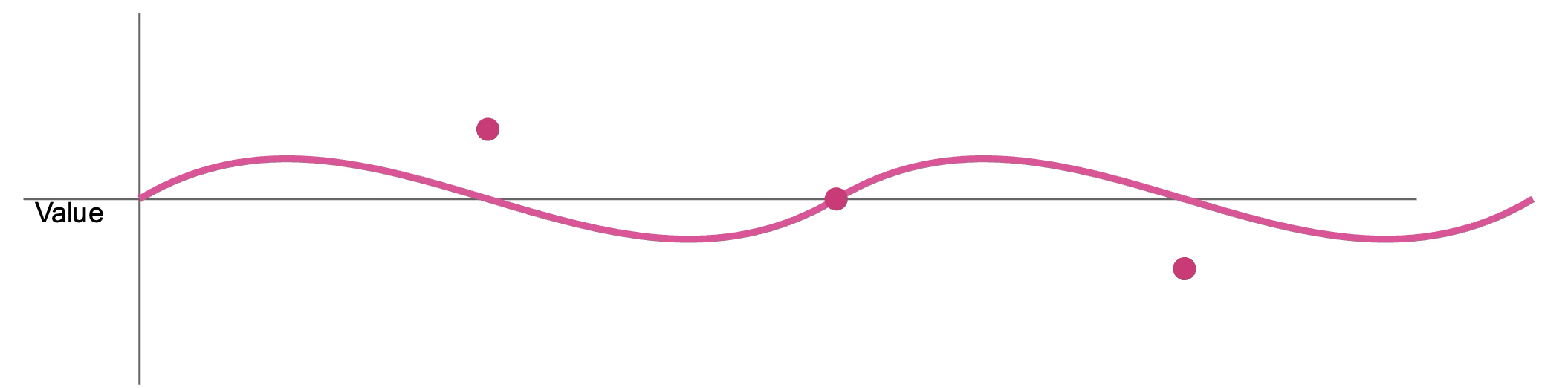

Processing Method: Handles data as a continuous flow, allowing it to adapt its behavior over time.

Processing Method: Handles data as a continuous flow, allowing it to adapt its behavior over time.

Key Characteristics

- Continuous-time dynamics

- Adaptive behavior

- Compact architecture

Applications

- Robotics control

- Time-series prediction

- Adaptive systems

Advantages

- Efficient use of parameters

- Capable of real-time adaptation

- Naturally handles temporal (time-based) data

Disadvantages

- Primarily limited to time-series data

- A newer and less established technology

- Can have a complex implementation

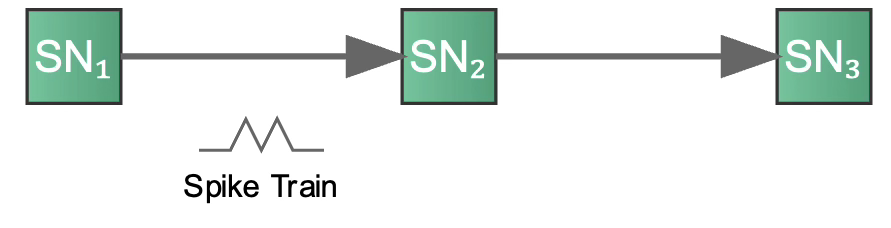

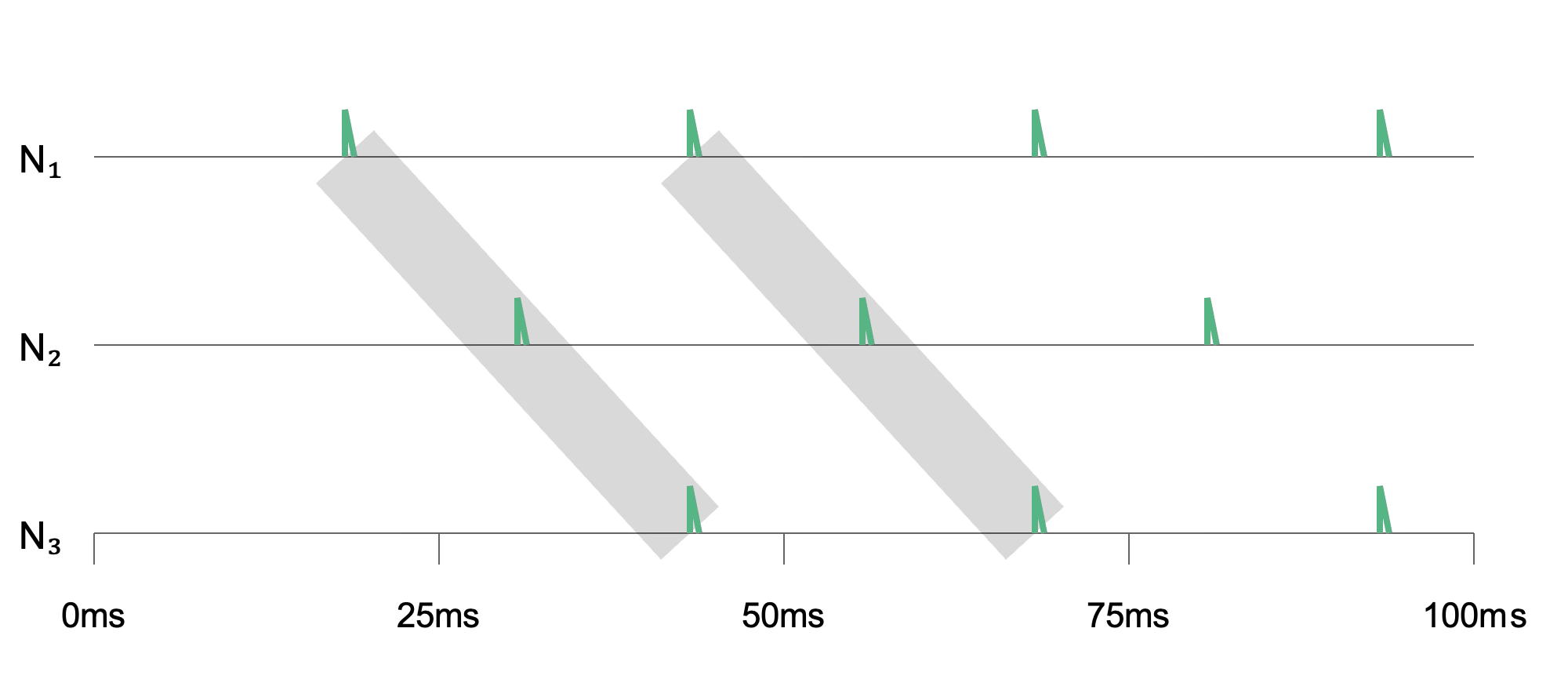

3. Neuromorphic AI

Example company: conscium AI

Core Concept: Inspired by the biological brain, this architecture uses networks of spiking neurons.

Core Concept: Inspired by the biological brain, this architecture uses networks of spiking neurons.

Processing Method: Operates based on discrete, event-driven “spikes”, processing information only when new data arrives.

Processing Method: Operates based on discrete, event-driven “spikes”, processing information only when new data arrives.

Key Characteristics

- Spike-based processing

- Event-driven computation

- Bio-inspired architecture

Applications

- Edge computing

- Sensor data processing

- Internet of Things (IoT) devices

Advantages

- Highly energy-efficient

- Fast, real-time processing capabilities

- Robust to noise in the input data

Disadvantages

- A limited ecosystem of tools and platforms

- Often requires specialized hardware for optimal performance

- Still an emerging technology

Comparative Analysis

| Architecture | Energy Efficiency | Maturity | Ease of Implementation | Adaptability |

|---|---|---|---|---|

| Large Language Models | Low | High | High | Moderate |

| Liquid Neural Networks | Moderate | Medium | Moderate | High |

| Neuromorphic AI | High | Low | Low | High |